
There are some small bugs with some parts like option 3 will fail because it tries to handle a number bigger than 32-bit limit, and I'm sure there is some more issues, but the general timings I think are evident unless I really messed up on my logic. So the question is which one of these is the fastest / should be fastest, and if there are any other fast(er) ways to get size of folder contents that has 100k files (and there are 100s of folders)īelow is my very hacky way of doing the comparison (butchered my program to see some outputs) Now with exception of #3 both of those ways seem way too slow for what I need (100s of thousands of files). Into a text file, and then read in the size at the bottomģ.The last way I am trying right now is using du (disk utility tool from MS - ).

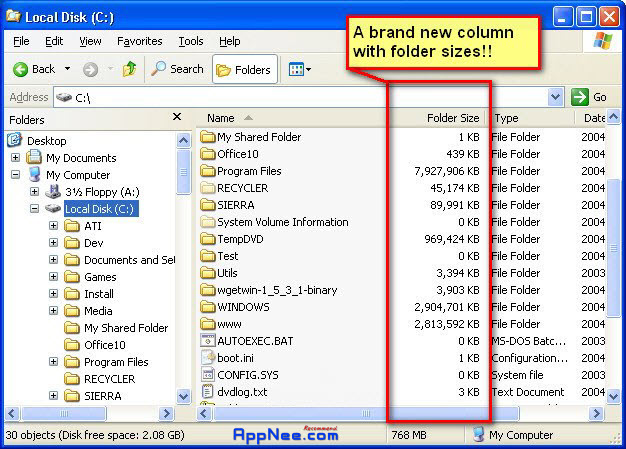

PLEASE SEE BELOW THE ORIGINAL QUESTION FOR SOME TEST COMPARISONS OF DIFFERENT WAYS:ġ.Iterate through directory using the code from Get Folder Size from Windows Command Line : offįor /r %%x in (folder\*) do set /a size =%%~zx
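For comparison with the `for /r` loop, here is a minimal sketch of the same recursive sum written in Python rather than batch. This is not one of the three options from the question and I have not timed it against them; the function name `folder_size` is made up for illustration. It relies on `os.scandir`, which on Windows can usually report a file's size from the directory listing without an extra system call per file, and on Python integers being arbitrary precision, so the total cannot run into the 32-bit limit that `set /a` has.

```python
import os


def folder_size(path):
    """Return the total size in bytes of all regular files under path.

    Hypothetical helper for illustration; unreadable or missing
    directories are skipped and contribute 0 bytes.
    """
    total = 0
    stack = [path]  # directories still to visit (iterative, no recursion limit)
    while stack:
        current = stack.pop()
        try:
            with os.scandir(current) as entries:
                for entry in entries:
                    if entry.is_dir(follow_symlinks=False):
                        # Descend into real subdirectories only, not links
                        stack.append(entry.path)
                    elif entry.is_file(follow_symlinks=False):
                        total += entry.stat(follow_symlinks=False).st_size
        except OSError:
            # Skip directories that cannot be listed
            continue
    return total
```

Calling `folder_size(r"C:\some\folder")` (a placeholder path) returns the byte count as a plain integer, which could then be printed or written to a file from the calling script.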
